I vividly remember sitting in a chaotic site office during a turnaround at a petrochemical plant, staring at a stack of "perfect" Job Hazard Analyses (JHAs). On paper, the risk of a nitrogen release had been mitigated to zero: the process was airtight, the valves were tagged, and the permits were signed. Yet two hours earlier, I had stopped a contractor from unbolting the wrong flange. He was fatigued, the labeling was obscured by grime, and he was working from memory rather than from the checklist. The process was robust, but the human element, the "people" side of the equation, had been completely bypassed by the theoretical risk assessment.

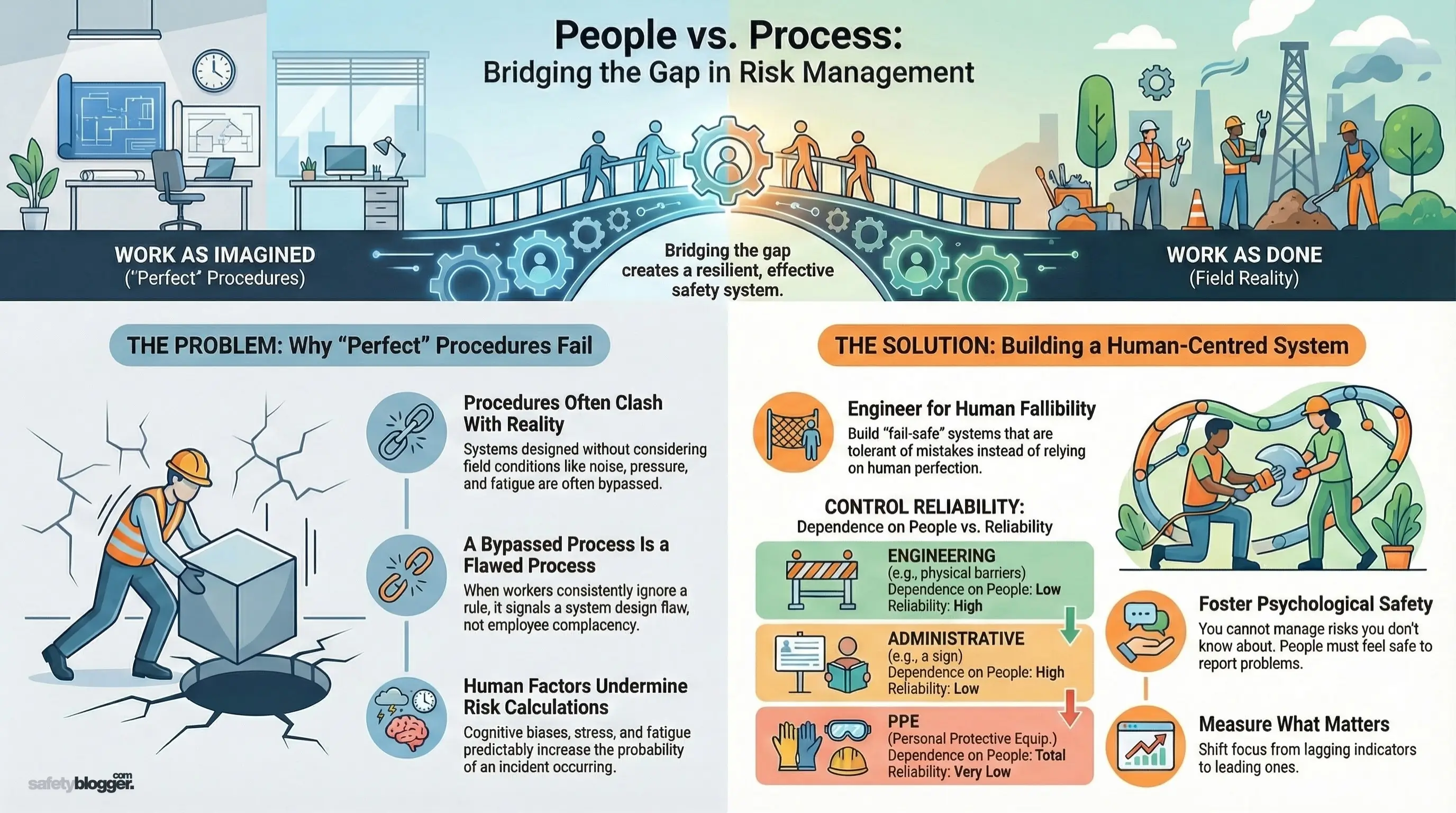

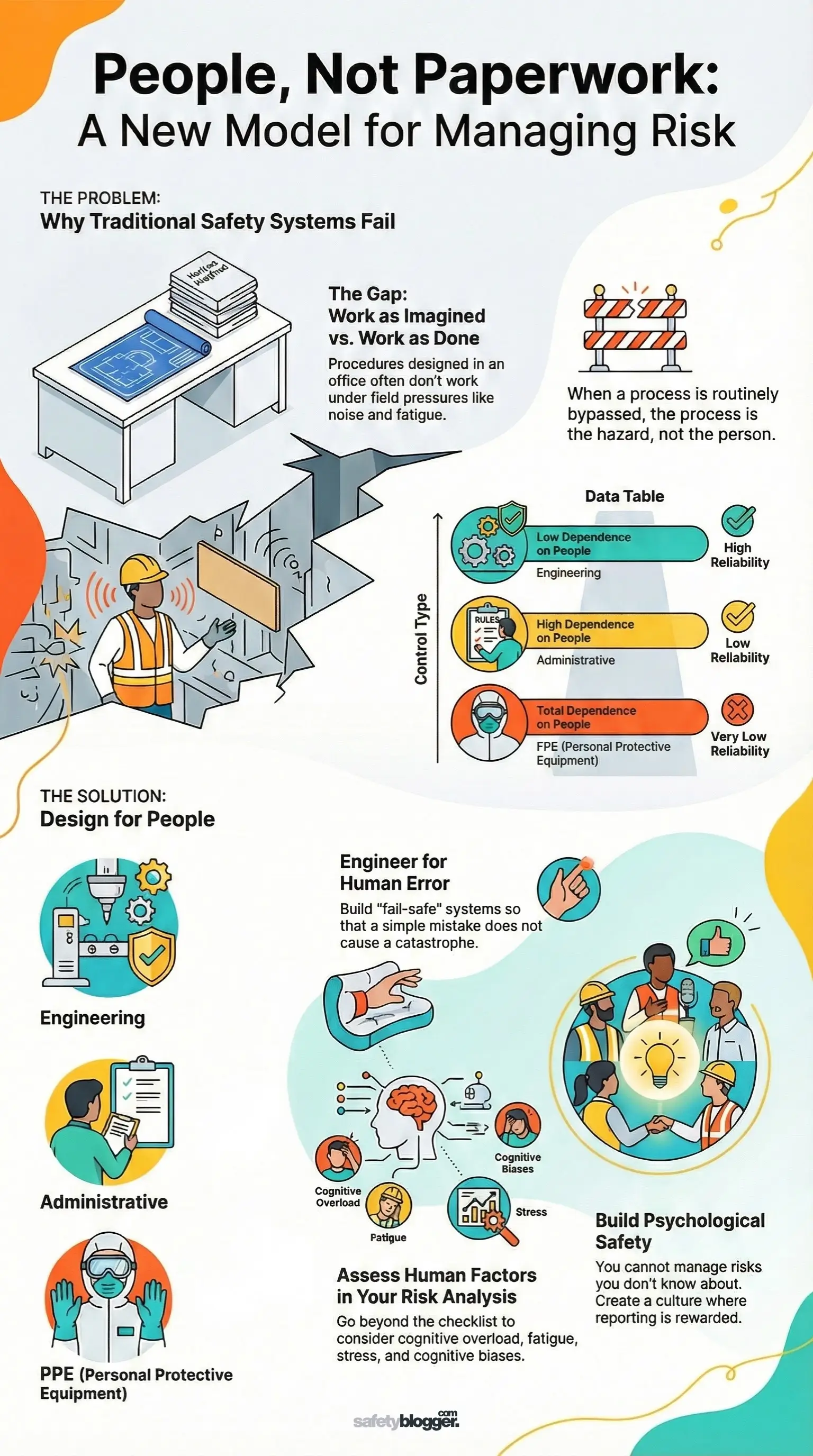

This disconnect between Work as Imagined (the process) and Work as Done (the people) is where the majority of catastrophic failures incubate. In this article, I will dismantle the traditional view that risk management is solely about paperwork and engineering controls. We will explore how to integrate human factors into your risk registers, why rigid processes often encourage unsafe behaviors, and how to build a safety management system that accounts for the fallibility of the human condition. This matters because a safety system that ignores human nature is not a shield; it is a ticking time bomb.

The Gap Between Procedure and Reality

In my years auditing ISO 45001 management systems, the most common failure I see is not a lack of procedure, but a procedure that is impossible to follow in the real world. When we design processes in a quiet, air-conditioned boardroom, we often fail to account for the mud, noise, time pressure, and physical constraints of the field.

If a procedure requires a worker to walk 20 minutes to fetch a specific tool for a 30-second task, human nature dictates they will improvise. We cannot simply label this as "complacency" or "violation." As risk managers, we must view these deviations as data. If a process is routinely bypassed, the process is the hazard, not the person.

Key Questions for Every Procedure:

Accessibility: Can workers physically reach the required controls, tools, and isolation points?

Clarity: Is the Safe System of Work (SSoW) written in the language of the crew?

Usability: Does the required PPE actually hinder the task (e.g., clumsy gloves for fine wiring)?

Feedback Loops: Do you punish bad news, or do you thank workers for highlighting unworkable procedures?
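Treating deviations as data can start with something as simple as logging field observations and flagging the procedures that crews routinely work around. Below is a minimal sketch. It assumes a hypothetical observation log of (procedure ID, followed-as-written) records and an arbitrary 30% bypass threshold. The procedure IDs, the threshold, and the function names are all illustrative; none of them comes from ISO 45001 or any other standard.

```python
from collections import defaultdict

# Hypothetical field observations: (procedure_id, followed_as_written)
observations = [
    ("SSoW-101", True),
    ("SSoW-101", False),
    ("SSoW-101", False),
    ("SSoW-202", True),
    ("SSoW-202", True),
    ("SSoW-202", False),
    ("SSoW-202", True),
]

# Assumed threshold: flag a procedure for redesign when more than 30%
# of observed jobs deviate from it. Tune this to your own context.
BYPASS_THRESHOLD = 0.30


def bypass_rates(records):
    """Return the fraction of observations that deviated, per procedure."""
    totals = defaultdict(int)
    deviations = defaultdict(int)
    for procedure_id, followed in records:
        totals[procedure_id] += 1
        if not followed:
            deviations[procedure_id] += 1
    return {pid: deviations[pid] / totals[pid] for pid in totals}


def procedures_to_review(records, threshold=BYPASS_THRESHOLD):
    """List procedures whose bypass rate exceeds the threshold, worst first."""
    rates = bypass_rates(records)
    flagged = [(pid, rate) for pid, rate in rates.items() if rate > threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


for pid, rate in procedures_to_review(observations):
    print(f"{pid}: bypassed in {rate:.0%} of observed jobs; review the procedure")
```

With the sample data, only SSoW-101 (two of three jobs deviated) is flagged. Treat the output as a prompt to revisit that procedure with the crew, not as a list of people to discipline.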

Pro Tip: During your next risk assessment review, take the draft to the shop floor. Ask the newest technician to explain how they would actually do the job. If their answer differs from your document, update the document; don't just lecture the technician.

Human Factors in Risk Assessment

We often calculate risk using the standard formula: Risk = Severity × Probability. However, we frequently underestimate the Probability variable because we assume people function like machines. We assume they will see the warning sign, hear the alarm, and follow the steps in order. In reality, cognitive bias, fatigue, and stress significantly alter both how people perceive risk and how likely they are to make an error.
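The arithmetic is trivial. The danger lies in the Probability input. The sketch below scores the same task twice: once with probability rated for Work as Imagined, and once for Work as Done. It assumes 1 to 5 ordinal scales, as used in a typical 5×5 risk matrix, and hypothetical band cut-offs. The example ratings are illustrative and are not drawn from any real assessment.

```python
# Minimal sketch of Risk = Severity x Probability on assumed 1-5 ordinal scales.
# The scales and band cut-offs below are illustrative assumptions, not a standard.


def risk_score(severity: int, probability: int) -> int:
    """Multiply severity and probability ratings, each on a 1-5 scale."""
    for name, value in (("severity", severity), ("probability", probability)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return severity * probability


def risk_band(score: int) -> str:
    """Map a 1-25 score to a band using hypothetical cut-offs."""
    if score >= 15:
        return "High"
    if score >= 8:
        return "Medium"
    return "Low"


severity = 4  # hypothetical rating, e.g. serious injury from a mis-rigged lift

# Work as Imagined: the assessor assumes every step is followed in order.
imagined = risk_score(severity, probability=2)
# Work as Done: fatigue and end-of-shift pressure make an error more likely.
done = risk_score(severity, probability=4)

print(f"As imagined: {imagined} ({risk_band(imagined)})")  # As imagined: 8 (Medium)
print(f"As done:     {done} ({risk_band(done)})")          # As done:     16 (High)
```

Nothing about the hazard changed between the two lines. Only the assumption about human behavior changed, and that assumption alone moved the task into a different band.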

I once investigated a crane incident in which the rigger signaled a lift even though the load was not centered. He wasn't reckless; he was suffering from "completion bias," the overwhelming psychological urge to finish a task that is nearly done, especially near the end of a shift. Effective risk management must identify these psychological precursors.

Key Human Factors to Assess:

Cognitive Overload: Is the operator managing too many alarms or inputs simultaneously?

Physical Capability: Does the task require sustained awkward postures leading to fatigue?

Environmental Stressors: How do heat, noise, or poor lighting affect decision-making?

Groupthink: Is the crew likely to agree with a dominant supervisor even if they see a hazard?
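One way to bring these factors into a risk register is to record each one explicitly against the hazard. You can then flag any factor that was never assessed, or that was found present without a documented control. Below is a minimal sketch. The field names and the example entry, including the "rotate riggers" control, are hypothetical, and it requires Python 3.9 or later for the built-in generic type hints.

```python
from dataclasses import dataclass, field

# The four factors listed above; the identifiers are hypothetical.
HUMAN_FACTORS = (
    "cognitive_overload",
    "physical_capability",
    "environmental_stressors",
    "groupthink",
)


@dataclass
class RiskRegisterEntry:
    """One hazard in a risk register, extended with human-factor findings."""
    task: str
    hazard: str
    # Factor name -> True if the assessment found it present for this task.
    factors_present: dict[str, bool] = field(default_factory=dict)
    # Factor name -> description of the control that addresses it.
    factor_controls: dict[str, str] = field(default_factory=dict)

    def gaps(self) -> list[str]:
        """Return factors never assessed, or present but without a recorded control."""
        issues = []
        for factor in HUMAN_FACTORS:
            if factor not in self.factors_present:
                issues.append(f"{factor}: not assessed")
            elif self.factors_present[factor] and factor not in self.factor_controls:
                issues.append(f"{factor}: present but no control recorded")
        return issues


entry = RiskRegisterEntry(
    task="Tandem crane lift",
    hazard="Dropped or swinging load",
    factors_present={
        "cognitive_overload": False,
        "physical_capability": True,
        "environmental_stressors": True,
    },
    factor_controls={"physical_capability": "Rotate riggers every two hours"},
)

for issue in entry.gaps():
    print(issue)
# environmental_stressors: present but no control recorded
# groupthink: not assessed
```

Making "not assessed" a visible gap matters as much as the controls themselves. An empty box on a form is easy to overlook, but an explicit flag forces someone to ask whether the crew will defer to the supervisor.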

Engineering Out the Human Error

The Hierarchy of Controls is our primary tool for managing risk, but we often rely too heavily on its bottom tiers, Administrative Controls and PPE, which depend entirely on human reliability. As a Risk Management Specialist, my goal is to move controls up the hierarchy so that human error does not result in injury.

If a worker forgets to close a valve, the system should not explode. This is the core of "Safety by Design." We must build tolerance into our systems so that mistakes are survivable. This is often referred to as "fail-safe" engineering.

Comparison of Control Reliability:

| Control Type | Dependence on People | Reliability | Example (Chemical Handling) |
|---|---|---|---|
| Elimination | None | 100% | Automating the mixing process; removing workers from the zone. |
| Engineering | Low | High | Interlocks that prevent the hatch from opening while pressurized. |
| Administrative | High | Low | A "Do Not Open" sign or a training session on pressure safety. |
| PPE | Total | Very Low | Chemical-resistant suit and respirator. |
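The table can double as a quick review rule: find the strongest control recorded for a hazard, and warn when nothing sits above the people-dependent tiers. Below is a minimal sketch using the table's four tiers and its hatch example. The function and control names are hypothetical. Note that the standard hierarchy also includes Substitution between Elimination and Engineering; the table omits it, and so does this sketch.

```python
from enum import IntEnum


class ControlTier(IntEnum):
    """Tiers from the comparison table, ordered from most to least reliable."""
    ELIMINATION = 1
    ENGINEERING = 2
    ADMINISTRATIVE = 3
    PPE = 4


# Tiers that rely mainly on people noticing, remembering, and complying.
PEOPLE_DEPENDENT = {ControlTier.ADMINISTRATIVE, ControlTier.PPE}


def review_controls(controls: dict[str, ControlTier]) -> str:
    """Report the strongest control and warn if it depends on human reliability."""
    if not controls:
        return "No controls recorded"
    # Lower enum value means a higher, more reliable tier.
    best_name, best_tier = min(controls.items(), key=lambda item: item[1])
    if best_tier in PEOPLE_DEPENDENT:
        return (f"Strongest control is '{best_name}' ({best_tier.name}); "
                "relies on human reliability, look for an engineering or elimination option")
    return f"Strongest control is '{best_name}' ({best_tier.name})"


hatch_controls = {
    "Do Not Open sign": ControlTier.ADMINISTRATIVE,
    "Chemical-resistant suit and respirator": ControlTier.PPE,
}
print(review_controls(hatch_controls))  # warns: strongest control is ADMINISTRATIVE

hatch_controls["Pressure interlock on hatch"] = ControlTier.ENGINEERING
print(review_controls(hatch_controls))  # Strongest control is 'Pressure interlock on hatch' (ENGINEERING)
```

Adding the interlock does not remove the sign or the suit. It simply means the hazard no longer hinges on someone reading the sign.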

The Role of Psychological Safety in Risk Management

You cannot manage a risk you do not know about, and you will not know about risks if your people are afraid to speak up. I have seen organizations with impeccable safety manuals crumble because their culture silenced dissent. If a worker feels that reporting a near-miss will result in a drug test or disciplinary action, they will hide the incident.

When we drive risk management underground, we lose our early warning signals. A robust risk management system requires "Psychological Safety": an environment where team members feel safe to take interpersonal risks, such as admitting a mistake or challenging a decision, in front of each other. This is not "soft" science; it is a hard operational necessity.

Normalize Fallibility: Leaders must admit their own safety mistakes to set the tone.

Reward Reporting: Celebrate the "good catch" rather than just tracking the "bad accident."

Stop Work Authority: Ensure this is a reality, not just a slogan. If a junior employee stops a site manager, back them up publicly.

Pro Tip: Stop using "Zero Harm" or "Zero LTI" (Lost Time Injury) as your primary success metric. These are lagging indicators that often encourage non-reporting. Start tracking leading indicators, such as the number of risk assessments reviewed or the number of safety observations raised by the workforce.

Conclusion

Managing risk is not about creating a paper trail to defend the company in court; it is about creating a reality where it is difficult to get hurt. We must stop treating people as the "problem" to be controlled and start viewing them as the "solution" to be engaged. A process that looks good on a laptop but fails in the mud is a failed process.

True risk management requires us to be humble enough to admit that our systems are imperfect and that our workers are human. By designing for human fallibility and fostering a culture where the truth can be spoken without fear, we move beyond compliance and toward genuine safety. We owe it to the families waiting at home to ensure that our understanding of risk matches the reality of the field.
